The biggest question I get about the Minion products is about security. People constantly tell me that a shop can’t implement Minion because it enables xp_cmdshell, whereas the SQL Server Maintenance Solution by Ola Hallengren doesn’t, so it’s naturally more secure. So in this post I’m going to show you, using both Minion and Ola’s routines, that what most people consider “more secure” is really just security theater. Now since the names of Ola’s routines change slightly, and the entire solution has kind of a long name, I’m going to refer to them collectively as SQLMaint. As well, I’m going to refer to the Minion suite of maintenance routines collectively as Minion.

For those of you who don’t know, Security Theater is when you have the appearance of security, but in reality you’re not secure at all. Your security measures are just for show. We see this everywhere, and it’s probably one of the main reasons that we’ve got so many data breaches happening all over the world. We’ve got admins making security decisions that they never bother testing, or never bother even questioning so while their intentions are good, they wind up with just the appearance of security. You’ll never be smarter than every hacker on the planet, but don’t get in your own way either.

So here I’m going to compare and contrast the methods that both Minion and SQLMaint use to perform their tasks and see which one is honestly more secure in your environment.

Let’s start by looking at how each product does its job.

Minion: Powershell and xp_cmdshell

First let’s look at Minion. The Minion tools use a combination of Powershell and xp_cmdshell inside SPs. Now, I’ve heard screams from some admins that Powershell isn’t secure because it could allow someone to do something inside SQL or the OS that they don’t have rights to do. This is called privilege escalation and it’s outright false. Powershell is a product like anything else. It has to follow security protocols the same as any other program. That means that there are no hooks inside Windows that notice when a Powershell script is running, and give it extra access. It simply runs under the context of the account that started the script. So Powershell is like any other scripting language from that respect. And if you’re accessing SQL Server from Powershell, then you’re still limited to your rights inside SQL itself. Powershell has no native way to access SQL so there’s no way for SQL to notice that Powershell is trying to connect and give it extra privileges. You access SQL from Powershell from one of 3 different methods: .net, sqlcmd, or invoke-sqlcmd.

Nobody has accused .net of privilege escalation, so making this call from Powershell wouldn’t do it either as you’re literally creating a .net framework object and connecting to SQL through any of those methods. And nobody thinks that sqlcmd gives you any extra rights either. You just connect to SQL and either use your AD account or your SQL account and you get the perms you’d have anyway. And of course, invoke-sqlcmd is just a Powershell wrapper for sqlcmd so there’s no extra security stuff going on in there either.

This is a good time to mention that Powershell is just a SQL client like anything else. SQL itself doesn’t even know Powershell exists. So when you connect to SQL through Powershell, as far as SQL is concerned, it might as well be SSMS, or Excel, or a website, or VBScript, or Python, etc. They’re all just clients and SQL doesn’t do anything special for any one of them. So the idea that Powershell leads to unintended privilege escalation is just that… it’s an idea. But it’s completely false. It has to follow the same security rules everything else does. And believe me, I really wish it would give me the rights to do things my personal account can’t.

Now does that mean that someone can’t fool Powershell into running under a different account so that it has more rights? Of course not. Hackers are smart. They’ve got all kinds of ways to get around things. But Powershell isn’t any less secure than VBScript, which comes on your box by default. So if they exploit a security mechanism they can use that exploit against Powershell or VBScript or even just batch file commands.

Second, the Minion tools use xp_cmdshell. By many admins this is considered to be a huge security hole. Why? Well in short the issue is definitely with privilege escalation. The issue isn’t with what they’re afraid the Minion tools are doing; they’re afraid of what someone else could do with xp_cmdshell enabled. Because with this enabled, someone could fairly easily access the OS layer and do all kinds of things under the SQL service account credentials.

SQLMaint: sqlcmd

SQLMaint works by calling sqlcmd from a job step. This is considered more secure because you don’t have to enable anything special outside of the default security configuration. However, I’m going to show you that this actually isn’t more secure, it can actually be considered less secure. So let’s get into it and I’ll show you what I mean.

xp_cmdshell is limited to sysadmins by default

Ok, we’ll start with xp_cmdshell. Let’s look at how you enable xp_cmdshell and the implications it has.

You implement xp_cmdshell by turning it on in sp_configure. This is an instance-level config so once it’s on, it’s on for all the DBs on that instance. Here’s the code you run to turn it on:

First you have to have ‘Show Advanced Options’ enabled.

Sp_configure ‘show advanced options’, 1

RECONFIGURE

Sp_configure ‘xp_cmdshell’, 1

RECONFIGURE

*Here’s something few people know. You actually only have to specify enough letters to make the option unique. So in the first command above you really only have to do this:

Sp_configure ‘show’, 1

If there were two options that started with ‘show’ you’d have to put enough letters in there to make it unique.

Now that it’s on, by default it’s only available to sysadmins. Nobody else can access xp_cmdshell in any way unless you give them access. And what does this mean in reality? It means that sysadmins have rights to do through xp_cmdshell what they’ve already got rights to do anyway. They’re really not getting any extra rights since most DBAs have full rights on the box anyway. And since xp_cmdshell runs under the context of the SQL service, then they’re running under whatever rights it has. This is one of the big reasons why it’s important to follow the least privilege rule for your service accounts. The other reason is because someone who knows the service account password could login under that account and do stuff and have their tracks completely covered. The threat doesn’t have to come from outside the company.

How to grant access to xp_cmdshell for non-sysadmins

You can give non-sysadmins rights to xp_cmdshell, but it takes an extra step. Since you don’t want just anyone running with full access, you have to define a proxy account. This proxy account provides the security context for all xp_cmdshell executions performed by non-sysadmins. This means that your non-sysadmins don’t automatically have unfettered access to anything on the OS or the network, because you’re going to make sure that the proxy account has only the rights it needs. You’re not going to make the proxy account an admin on any of your boxes. Here’s how you create the proxy:

EXEC sp_xp_cmdshell_proxy_account ‘Domain\ProxyLogin,’$$$$###MyStr0ngPassw0rd!@#!@#!!!’

And yes, it has to be an AD account… or at least a local Windows account (I would imagine). And the reason is simple. The only reason for running xp_cmdshell is to access OS-level things. The OS has nothing to do with SQL security so you need to pass it a Windows account. Now you can grant any non-sysadmin execute rights on xp_cmdshell.

The question is do you need to give non-sysadmins access to xp_cmdshell? I have to say that in my 20yrs in SQL, I think I can remember needing this only like once… maybe twice. The point is, that this is a lot like linked servers. The answer is almost always NO, unless there’s such a compelling reason that can’t be gotten around any other way. And that’s almost never. So in all but the strictest of circumstances, xp_cmdshell is only going to be available to your admins who have OS rights to do what they need anyway. Xp_cmdshell just makes it easier for them to code it.

The dangers of sqlcmd

Now let’s look at the method SQLMaint uses to launch its routines. Like I said, SQLMaint calls sqlcmd, which is an OS-level cmdline executable. So you have to have a way to make an OS-level call. And the way this is done is by using the command line job step type. So this job step type allows you to write any cmdline operation you need and it’ll run it for you, you guessed it, under the service account credentials. So by default this functionality is turned on and most people don’t even know it. And if you don’t know it’s there by default then how can you lock it down? The good news though is that only sysadmins have access to this type of job step by default. But anyone who has rights to alter jobs can make changes to the step.

So what does this mean for security vs security theater? Well, with xp_cmdshell you know you’re turning it on and you have to go out of your way to create the proxy and give non-sysadmins rights to it. So at every step of the way you’re aware of what’s going on. But with the job step you’re accepting the default config so anyone with the rights can come in and create a job with this job step and do something they’re not supposed to, or better yet, just alter the current job step in SQLMaint’s call.

Here’s a call I took for SQLMaint’s backup routine directly from its website:

sqlcmd -E -S $(ESCAPE_SQUOTE(SRVR)) -d master -Q “EXECUTE dbo.DatabaseBackup @Databases = ‘USER_DATABASES’, @Directory = ‘C:\Backup’, @BackupType = ‘FULL'” –b

The above command backs up the user DBs and this is the code that’s inside his command line job step. Now, what if I wanted to be malicious? I could easily open the job and alter it to do this:

sqlcmd -E -S $(ESCAPE_SQUOTE(SRVR)) -d master -Q “CREATE LOGIN [MyNewSALogin] with password = ‘StrongPassword’; EXEC master..sp_addsrvrolemember @loginame = N’ MyNewSALogin ‘, @rolename = N’sysadmin’; EXECUTE dbo.DatabaseBackup @Databases = ‘USER_DATABASES’, @Directory = ‘C:\Backup’, @BackupType = ‘FULL'” -b

Ok, so I just gave myself sysadmin on this instance. And I know what you’re thinking. You have to have admin rights to be able to make this change. Well, that’s not even close to accurate. Not only can you have job manager perms, but you don’t have to have any of those perms. In fact, a regular user can make these types of changes with very minimal rights. Let me give you a scenario that’s not only possible, but is highly likely.

You have a production box that has lots of jobs on it. You have different groups that need to create, alter or manage these jobs because as the DBA team you don’t want to have to get involved every time one of these many jobs has an issue, or every time they need an update… because it happens a lot. So you give that app team rights to manage jobs. Here’s where the fun begins. There are multiple ways this can go wrong, but I’ll go with the simplest. All your user needs is 3 permissions inside msdb, and here they are:

grant select on sysjobs to [SecurityTest]

grant select, update on sysjobsteps to [SecurityTest]

I created a SecurityTest user and gave him access to msdb. Then I granted the perms above. Now the only thing the user has to do to recreate what I did above is run a simple update.

update sysjobsteps

set command = ‘CREATE LOGIN [MyNewSALogin] with password = ”StrongPassword”; EXEC master..sp_addsrvrolemember @loginame = N” MyNewSALogin ”, @rolename = N”sysadmin”; ‘ + command

where job_id = ‘0C06625F-F518-4D86-9E5A-063AE8B9C4E4’

and step_name = ‘BackupDBs’

He can query sysjobs to get the list of jobs and find the one he’s interested in, and then update sysjobsteps to make the change. Now, the next time that job runs, he’ll have a sysadmin account. He’s then free to cover he tracks by removing the changes, and even give himself a couple backdoors in case someone discovers his new account. This can even include adding this type of code inside of other SPs or jobs so that if his rights are ever removed, they’ll be put back. And depending on how many instances run off of the same SQL service account, he could easily spread his access to every other server in your shop. And he doesn’t even have to be that smart to do it. This isn’t all that clever of a hack.

But you see what’s happened here, right? You wanted to give him rights to manage his jobs and you ended up giving him sa. And he didn’t need that many rights to do it. See, one of the misunderstandings is that the msdb tables are system tables. They’re not. They’re regular user tables, so you can easily update them directly.

Lock down sqlcmd!

But how do you protect against this? Well, the same way you did for xp_cmdshell. You create a proxy account and run those command line job steps under an account with much lesser rights. This way, even though someone might change the code the job runs, it’ll fail because the account won’t have the rights to make the change.

Security is a complicated animal with many facets, twists, turns, and pitfalls. And to say that one solution is more secure than another just because one of them uses a feature that’s turned off by default simply isn’t the case. I hope I’ve proven that turning on xp_cmdshell isn’t inherently bad, and I really hope you understand now that simply leaving it off doesn’t mean that you’re secure. In fact, I’d say you’re at greater risk because you’re not likely to have addressed this issue in your jobs. You’re merely engaging in Security Theater. You have to be a sysadmin to turn on xp_cmdshell and you have to give someone specific rights to run it after creating a proxy. But you could innocently give someone perms in msdb and give them the keys to the kingdom.

So I hope you start looking at the xp_cmdshell issue differently because it’s a wonderful feature that allows you to do some very cool things. And it lets Minion tools give you some really awesome functionality that you wouldn’t have otherwise.

MinionWare will release Minion CheckDB on Febuary 1, 2017!

MinionWare will release Minion CheckDB on Febuary 1, 2017!

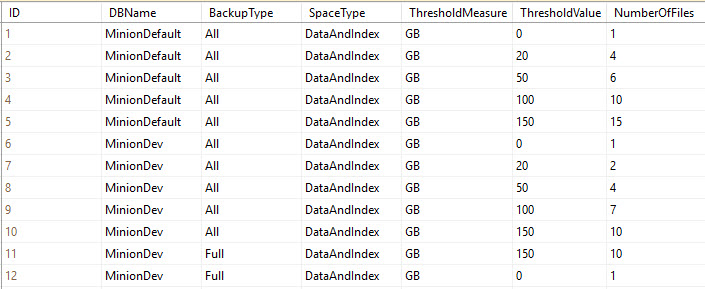

Minion Backup 1.1

Minion Backup 1.1