I read a blog post today from my good friend @SirSQL (Nic Cain) where he was talking about how to change retry attempts on all the jobs on a server. Well, technically it’s the job steps that have the retry attempts, not the jobs themselves. And whenever I see something like this, my fabulously huge MCM brain tries to turn it into PowerShell. So I put my fingers to the keys and about 10 minutes later I had a workable script. And while Nic’s solution is perfectly good T-SQL, it’s another one of those things that highlights the power of the shell, because I only need 4 lines of code to do it.

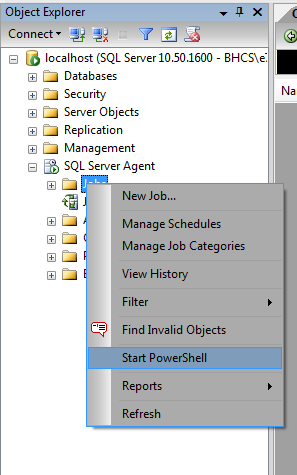

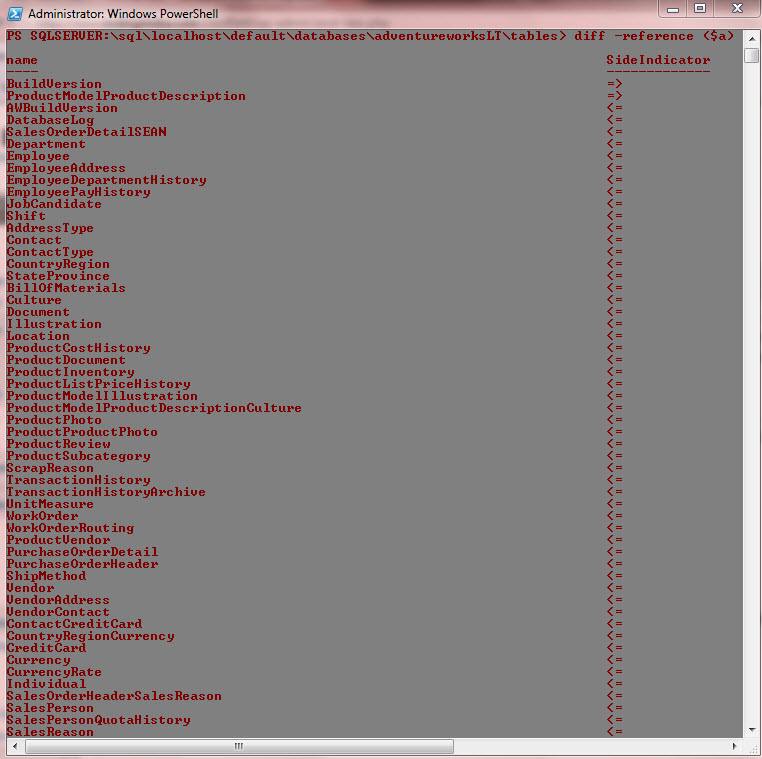

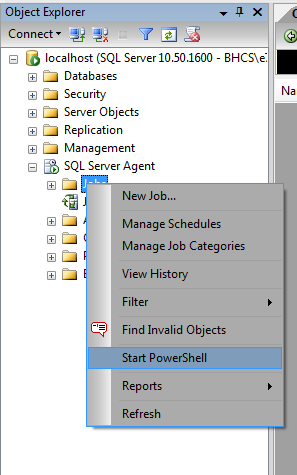

The easiest way to do this is through SSMS. Just start PS from the Jobs node.

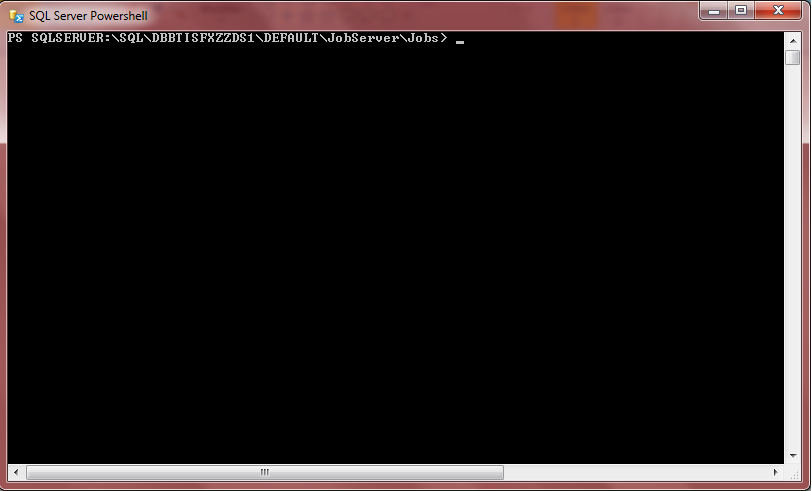

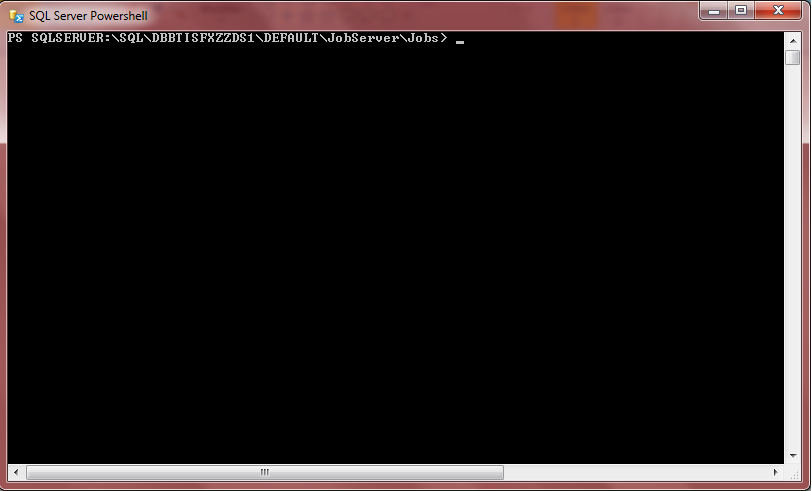

And that’ll give you a blank SQLPS window that’s already in the jobs node.

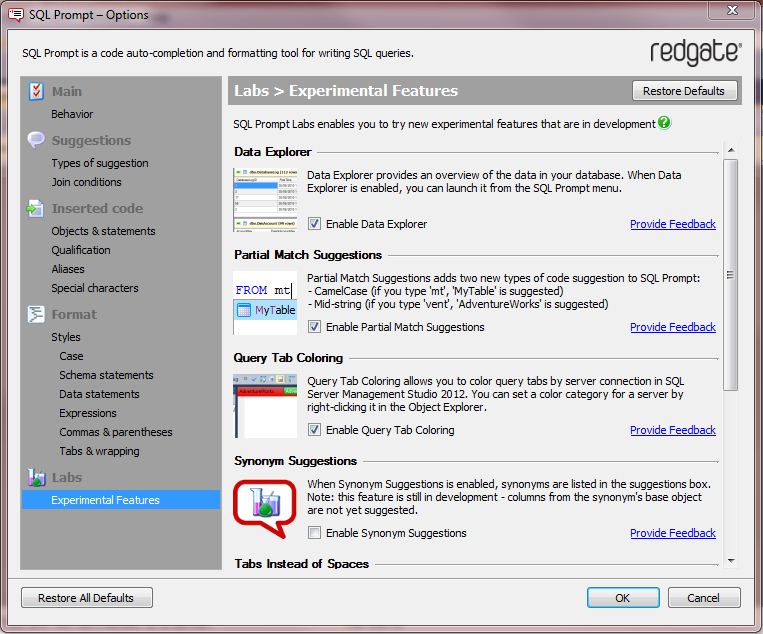

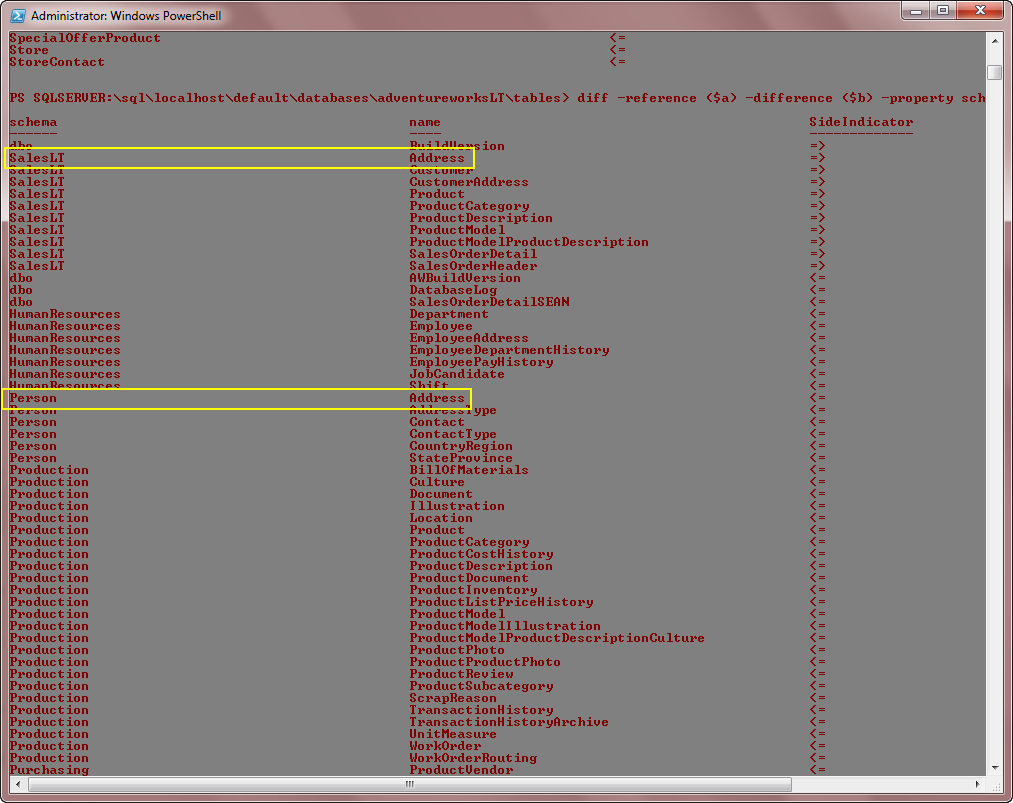

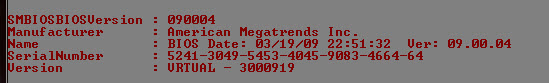

Since we’re in SQLPS we don’t need to load the assembly, but I’ll do it anyway to show you how, because you might not be in SQLPS when you do it. It won’t hurt anything to load it again. That’s one of the things SQLPS does for you: it loads these assemblies.

Here’s how you load the assembly and set a variable to the JobStep object type.

[reflection.assembly]::loadwithpartialname("microsoft.sqlserver.smo")

$js = new-object microsoft.sqlserver.management.smo.agent.jobstep

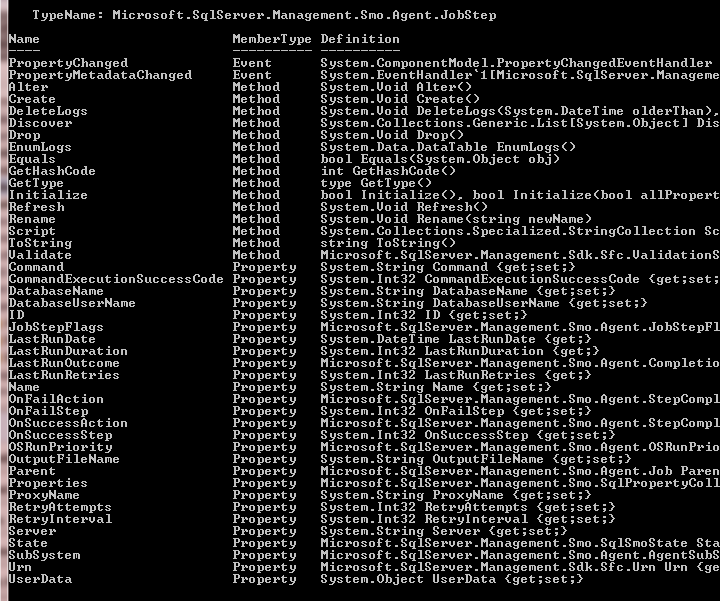

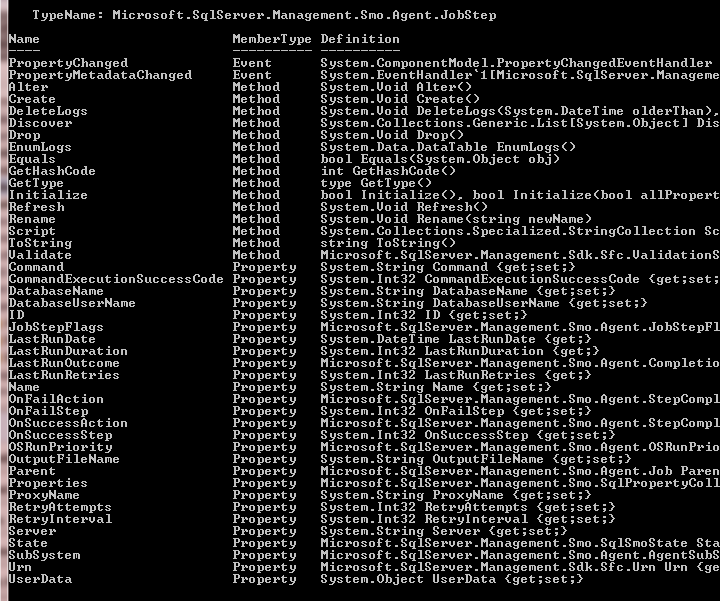
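One thing to watch if you really aren't in SQLPS: `dir` won't hand you jobs from a plain PowerShell prompt, so you have to reach them through an SMO Server object instead. Here's a minimal sketch of that, assuming SMO is installed on the machine. The function name `Get-AgentJob` and the `localhost` instance name are placeholders I made up for illustration, not anything from Nic's post.

```powershell
# Returns the Agent jobs for an instance via SMO, for use outside SQLPS.
# Get-AgentJob is a hypothetical helper name, not a built-in cmdlet.
function Get-AgentJob {
    param(
        # Placeholder default; swap in your own instance name.
        [string]$ServerInstance = "localhost"
    )

    # Load SMO; harmless if it is already loaded (SQLPS does this for you).
    [void][reflection.assembly]::LoadWithPartialName("Microsoft.SqlServer.Smo")

    $server = New-Object Microsoft.SqlServer.Management.Smo.Server $ServerInstance

    # JobServer.Jobs is the collection that dir lists from the Jobs node.
    $server.JobServer.Jobs
}

# Usage (needs a reachable instance):
# $js = Get-AgentJob "localhost" | %{$_.EnumJobStepsById()}
```

From there the rest of the script works the same way, because you end up with the same Job objects either way.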

Now that we have our new $js var (stands for JobStep), we can fill it with the steps of all the jobs on the server. First, though, let’s take a look at the members of our new var.

$js | gm

Now you’ll get a good listing of all the members so you’ll know what you can and can’t do with it.
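That listing includes every method and event too, so it can be handy to narrow it down to just the properties you're allowed to set. This is only a sketch: `Get-SettableProperty` is a helper name I invented, and it leans on the fact that Get-Member shows writable properties with `set;` in the Definition column.

```powershell
# Lists only the .NET properties of an object that have a setter.
# Get-SettableProperty is a hypothetical helper name.
function Get-SettableProperty {
    param(
        [Parameter(Mandatory = $true)]
        $InputObject
    )

    $InputObject | Get-Member -MemberType Property |
        Where-Object { $_.Definition -match 'set;' } |   # e.g. "int RetryAttempts {get;set;}"
        Select-Object -ExpandProperty Name
}

# Usage: Get-SettableProperty $js
```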

Towards the bottom you’ll see the property we’re interested in here: RetryAttempts. Now we just have to go through all the steps and change the retries to what we want. Here I’ll change it to 5 just because it’s a nice round number. You’ll be surprised how easy it is to do this. I’ll go ahead and tack it onto the partial script above and this will become our entire script.

[reflection.assembly]::loadwithpartialname("microsoft.sqlserver.smo")

$js = new-object microsoft.sqlserver.management.smo.agent.jobstep

$js = dir | %{$_.enumjobstepsbyid()}

$js | %{$_.RetryAttempts = 5;$_.alter()}

Ok, that’s all we need to do to change the RetryAttempts property for all the jobs on the server. But we’re not done talking about this… not even by a long shot.

First, in line 3 notice I call the EnumJobStepsById() method on each item of the dir. This is how I populate the $js var with all of the job steps. What this line says is list all of the jobs (using dir) and then for each one, get a list of its steps and put it in $js.

Line 4 runs through each of the job steps in $js and actually performs the work of setting RetryAttempts to our new value. And remember, job steps have an Alter() method, and typically whenever an object in PS has an Alter() method it wants you to use it. If you don’t, the changes will take effect in your PS session only and will not be pushed to the server. So call the Alter() method.

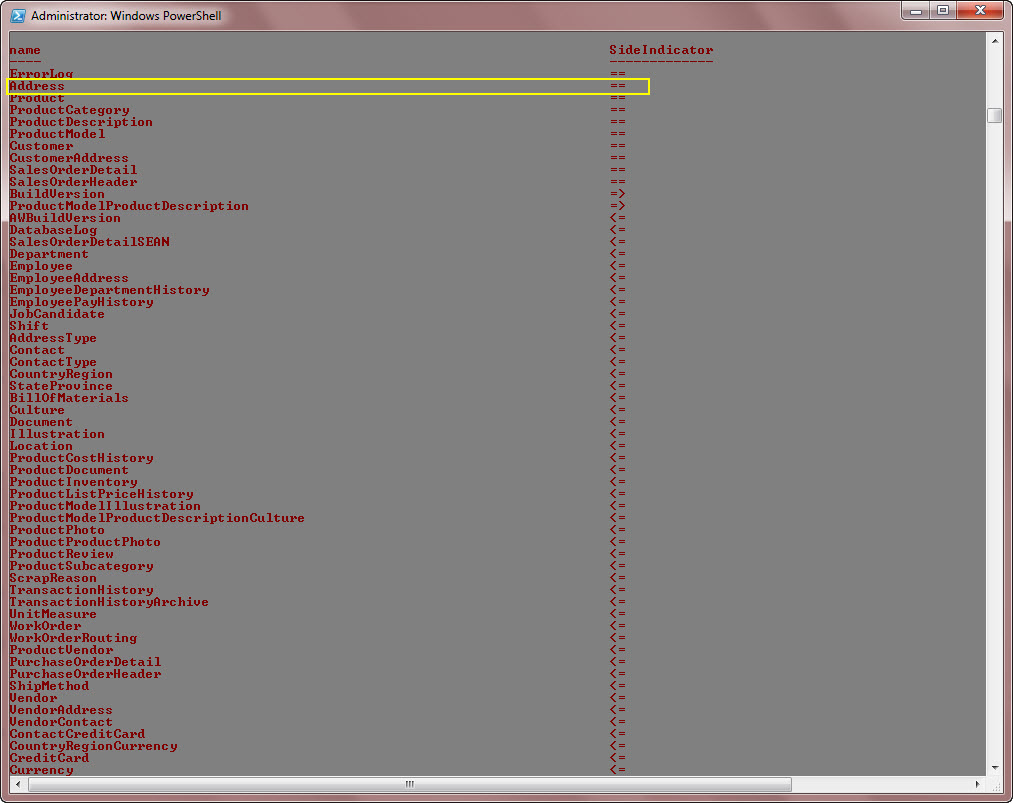
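Since Alter() is the point of no return, you may want a way to see what would change before anything gets pushed to the server. Here's one way that could look, as a sketch and not the only way to do it: a small wrapper function (the name `Set-StepRetryAttempts` is mine) that uses PowerShell's standard -WhatIf support and skips steps that already have the value you want.

```powershell
# Sets RetryAttempts on each piped-in step and pushes it to the server.
# Run with -WhatIf to see what would change without calling Alter().
# Set-StepRetryAttempts is a hypothetical helper name.
function Set-StepRetryAttempts {
    [CmdletBinding(SupportsShouldProcess = $true)]
    param(
        [Parameter(Mandatory = $true, ValueFromPipeline = $true)]
        $Step,

        [int]$RetryAttempts = 5
    )

    process {
        # Already at the target value, so there is nothing to push.
        if ($Step.RetryAttempts -eq $RetryAttempts) { return }

        if ($PSCmdlet.ShouldProcess($Step.Name, "Set RetryAttempts to $RetryAttempts")) {
            $Step.RetryAttempts = $RetryAttempts
            $Step.Alter()   # without this the change stays in the PS session
        }
    }
}

# Usage: $js | Set-StepRetryAttempts -RetryAttempts 5 -WhatIf
```

Drop the -WhatIf once the preview looks right and the same line makes the change for real.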

Now, I know what you’re saying… how would you do it for only some of the jobs? Because so far we’ve assumed that you want to apply the changes to every job on the server. Well, there are 2 ways to do that and they’re incredibly easy.

First, you can limit the data from the dir cmd in line 3. It could look like this:

[reflection.assembly]::loadwithpartialname("microsoft.sqlserver.smo")

$js = new-object microsoft.sqlserver.management.smo.agent.jobstep

$js = dir | ?{$_.Name -match "maint"} | %{$_.enumjobstepsbyid()}

$js | %{$_.RetryAttempts = 5;$_.alter()}

Take note of the new line 3. I’ve just added a where clause to the pipeline so now only jobs with the word “maint” in their names will be in the list.
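It's worth knowing exactly what -match lets through, because it's a case-insensitive regex match and not an exact comparison. Here's a quick illustration you can run anywhere. The job names are made up purely for the demo.

```powershell
# Hypothetical job names, just to show what -match "maint" lets through.
$names = "DB Maintenance", "Nightly_MAINT", "Backup Full", "maintain indexes"

# Case-insensitive, matches anywhere in the name.
$matched = $names | ?{$_ -match "maint"}
$matched      # DB Maintenance, Nightly_MAINT, maintain indexes

# Anchor the pattern if you only want names that start with it.
$anchored = $names | ?{$_ -match "^maint"}
$anchored     # maintain indexes
```

So if your naming is loose, preview the list of matching jobs before you let the change loose on them.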

The 2nd way is just as easy, but you do it at the job step level. If you remember from above when we looked at the members of $js, there was a property “Parent”. This is the parent job for the step. So all you have to do is add the where clause to the $js pipeline instead and you’ll achieve the same thing.

[reflection.assembly]::loadwithpartialname("microsoft.sqlserver.smo")

$js = new-object microsoft.sqlserver.management.smo.agent.jobstep

$js = dir | %{$_.EnumJobStepsById()}

$js | ?{$_.Parent -match "maint"} | %{$_.RetryAttempts = 5;$_.alter()}

Now the new line 4 reflects our easy change.

There are so many uses for this code it’s incredible. There are plenty of properties to change and so many ways to limit the result set. Let’s say you have a maint routine on all of your servers and you want to alter the command that the 1st job step runs. That’s very easy. Instead of changing the RetryAttempts property, just change it to the Command property like this:

[reflection.assembly]::loadwithpartialname("microsoft.sqlserver.smo")

$js = new-object microsoft.sqlserver.management.smo.agent.jobstep

$js = dir | %{$_.enumjobstepsbyid()}

$js | ?{$_.Parent -match "maint" -and $_.ID -eq 1} | %{$_.Command = "My new code.";$_.alter()}

There’s nothing here Nic couldn’t change his script to do pretty easily, but this is much shorter and easier to read, I think. It also has one huge advantage… I can run it on multiple servers without much effort. I’m not going to go down that road in this post because I’ve already posted many solutions with that code in them, so it wouldn’t take much for you to add that to this script.
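If you haven't seen those other posts, here's roughly the shape a multi-server version could take. Treat it as a sketch under assumptions and not the code from those posts: the function name `Set-RetryAttemptsOnServers` and the instance names `SQL01` and `SQL02` are placeholders, and it connects through SMO with your Windows login instead of starting from the SQLPS Jobs node.

```powershell
# Applies the same RetryAttempts change to every instance in a list.
# Set-RetryAttemptsOnServers is a hypothetical helper name.
function Set-RetryAttemptsOnServers {
    param(
        [Parameter(Mandatory = $true)]
        [string[]]$ServerInstance,

        [int]$RetryAttempts = 5
    )

    # Load SMO; harmless if it is already loaded.
    [void][reflection.assembly]::LoadWithPartialName("Microsoft.SqlServer.Smo")

    foreach ($name in $ServerInstance) {
        $server = New-Object Microsoft.SqlServer.Management.Smo.Server $name

        # Same pipeline as the single-server script, fed from SMO instead of dir.
        $server.JobServer.Jobs |
            %{$_.EnumJobStepsById()} |
            %{$_.RetryAttempts = $RetryAttempts; $_.Alter()}
    }
}

# Usage (placeholder instance names):
# Set-RetryAttemptsOnServers -ServerInstance "SQL01", "SQL02" -RetryAttempts 5
```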

So anyway, thanks Nic for letting me use you as the base for this post. Your solution is fine, I just prefer the look and feel of 4 lines of code.

I’ve also got a companion video for this post:

http://midnightdba.itbookworm.com/VidPages/PowershellChangeJobStepProperties/PowershellChangeJobStepProperties.aspx