Hey guys, I posted a new video. It’s based on the blog post I did the other day. I got a couple requests to make it into a video so here it is.

Category Archives: Time-savers

Why won’t the log shrink?

I know that everybody always says you shouldn’t shrink your log files, but let’s face it, sometimes it really is necessary. Maybe you had a big op that was out of the norm and it grew your log bigger than it usually runs, or maybe (and more commonly) your backups were failing for a few days before you noticed and the log is now huge and you need to get it back down to a more manageable size. For whatever reason, you need to shrink your log. Now, I’m gonna show you the T-SQL way because it’s what I’ve got handy and I’ve used this script for years.

So here’s the script and then we’ll discuss a couple things… because the script isn’t the meat of this post. But this one will do all DBs on the server, so just use the logic if you don’t like the cursor. And it has no effect on DBs that can’t have their logs shrunk so don’t worry about causing damage.

Declare @curDBName sysname

Declare DBName Cursor For

Select Name from sysDatabases

Open DBName

Fetch Next From DBName into @curDBName

while @@Fetch_Status = 0

Begin

DBCC ShrinkDataBase (@curDBName , TRUNCATEONLY)

Fetch Next From DBName into @curDBName

END

Close DBName

DeAllocate DBName

OK, so that’s fairly standard admin stuff. Now, if you wanna check that you’ve had the effect you expected, you can run this command both before and after to make sure you’ve shrunk it.

DBCC SQLPERF(LOGSPACE)

And what you’re going to get from that is a result set that gives you the DBName, the log file size, and the % used. What you want to look at is the log file size and the %Used. If the size of the file is really big and the %Used is really small, that means your log file is a lot bigger than it needs to be. So an example would be you’ve got a log file that’s 52000MB and 3% used. That’s a lot of empty space. So you’ve definitely got to use the above cmd to shrink it.
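If you'd rather not eyeball that result set, a filter along these lines can pick out the big-but-empty logs for you. This is only a sketch: the function name Select-OversizedLog and the 1000MB / 10% cutoffs are assumptions for illustration, and the sample rows just stand in for the columns DBCC SQLPERF(LOGSPACE) returns. It also assumes PowerShell 3.0 or later for [pscustomobject].

```powershell
# Sketch: flag logs that are large but mostly empty.
# Expects rows shaped like the DBCC SQLPERF(LOGSPACE) result set.
# The function name and default thresholds are illustrative assumptions.
function Select-OversizedLog {
    param(
        [Parameter(ValueFromPipeline = $true)] $Row,
        [double] $MinSizeMB = 1000,   # ignore logs smaller than this
        [double] $MaxUsedPct = 10     # "mostly empty" cutoff
    )
    process {
        if ($Row.'Log Size (MB)' -ge $MinSizeMB -and $Row.'Log Space Used (%)' -le $MaxUsedPct) {
            $Row
        }
    }
}

# Made-up sample rows standing in for the real result set.
$logSpace = @(
    [pscustomobject]@{ 'Database Name' = 'Sales';  'Log Size (MB)' = 52000; 'Log Space Used (%)' = 3 }
    [pscustomobject]@{ 'Database Name' = 'HR';     'Log Size (MB)' = 200;   'Log Space Used (%)' = 2 }
    [pscustomobject]@{ 'Database Name' = 'Orders'; 'Log Size (MB)' = 8000;  'Log Space Used (%)' = 75 }
)

$oversized = @($logSpace | Select-OversizedLog)
$oversized | Format-Table -AutoSize
```

Against a live box you would feed it the output of Invoke-Sqlcmd running DBCC SQLPERF(LOGSPACE) instead of the sample rows.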

But what happens if you shrink it with that cmd and it doesn’t shrink? Or maybe it just shrinks a little bit? Well, now you’ve got to troubleshoot why it’s not shrinking. This is one of my standard interview questions and you’d be surprised how many people get it wrong. As a matter of fact, I’ve never had anybody get it right. So here’s what you do… pick up a book and become a DBA. Ok, seriously though, it’s extremely easy.

Run this query in master to see what your log may be waiting on to truncate.

SELECT Name, log_reuse_wait_desc FROM sys.databases

What you’ll get here is a result that tells you what the log is waiting for before it can reuse the VLFs, and in this case, kill them so you can shrink the file. There are any number of things that can show up here as the reason. You could be waiting on a long transaction, or on a log backup, or on replication, etc. So next time you have a problem getting a log to shrink, come here first and find out what it’s waiting for before you go guessing what the problem might be.
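On a server with a lot of DBs, most of the rows will say NOTHING, which just means the log isn't waiting on anything. A small filter like the one below trims the list down to the DBs that are actually held up. Treat it as a sketch: Get-LogReuseWait is a made-up helper name and the rows are sample data in the shape of the query above.

```powershell
# Sketch: keep only databases whose log is actually waiting on something.
# Get-LogReuseWait is a hypothetical helper name, not a built-in cmdlet.
function Get-LogReuseWait {
    param([Parameter(ValueFromPipeline = $true)] $Row)
    process {
        if ($Row.log_reuse_wait_desc -ne 'NOTHING') { $Row }
    }
}

# Made-up sample rows shaped like the sys.databases query.
$rows = @(
    [pscustomobject]@{ Name = 'Sales';  log_reuse_wait_desc = 'LOG_BACKUP' }
    [pscustomobject]@{ Name = 'HR';     log_reuse_wait_desc = 'NOTHING' }
    [pscustomobject]@{ Name = 'Orders'; log_reuse_wait_desc = 'ACTIVE_TRANSACTION' }
)

$waiting = @($rows | Get-LogReuseWait)
$waiting | Sort-Object log_reuse_wait_desc | Format-Table Name, log_reuse_wait_desc -AutoSize
```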

That’s all I’ve got.

[EDIT]

Ok, I got a comment below that says BOL states that TRUNCATEONLY only works for data files. I checked and he’s right, BOL does say that. However, I’ve been using this method for years and I just ran a test on it individually using the code I pasted out of this post so BOL is wrong on this one. I ran my test on SQL2K8 but I don’t see any reason why it would change significantly with a lower version.

Audit service accounts with Powershell

I thought I’d kick off the week with a useful little PS script I wrote last week. It happens from time to time where you need to find out what AD accts your SQL boxes are running on. It could be that you simply want to see how diverse they are or maybe you want to change the password of an acct and you want to see what the impact will be. All the same, it can be useful to audit the startup accts for your SQL services.

So here’s a little script you can use to get that done. This again is part of a much larger process I have, but I’ve pulled it out and simplified it to its base components for you. I’ve also added the DB side of the process for completion.

Here’s the PS piece:

$Server = 'localhost'

$StatsDB = 'DBStats'

$SourceSQLScript1 = "\\Server1\F$\SQLServerDBA\Scripts\Collector\SQLQueries\AllSQLBoxes.txt";

$SqlCmd1 = Invoke-Sqlcmd -ServerInstance $Server -Database $StatsDB -inputfile $SourceSQLScript1

$SqlCmd1 | % {

$_.ServerName; ####Print ServerName

[System.Int32]$ID = $_.InstanceID;

[System.String]$ServerName = $_.ServerName;

$ExecutionDateTime = Get-Date -Format "%M/%d/%y %H:m:ss";

$a = Get-wmiobject win32_service -ComputerName $ServerName -filter "DisplayName like '%sql%'" -ErrorVariable err -ErrorAction SilentlyContinue

$a | % { $DisplayName = $_.DisplayName;

$StartName = $_.StartName;

Invoke-Sqlcmd -ServerInstance $Server -Database $StatsDB -Query "Collector.spServiceAcctInsert '$ExecutionDateTime','$ID','$DisplayName','$StartName'"

}

}

Most of this is the same as the other scripts I’ve posted so I’m not really gonna go into every line like I have in the past. I am going to explain the params for the invoke-sqlcmd line though. In this line I’m just calling an SP that I’ve put in place to log these details to a table and you can use as much or as little of this as you wish. The $ID param is the ID of the server. In my complete solution I have a server table and I log things to other tables using the ServerID that comes from that table. You’re free however to just capture the ServerName here instead. But you notice that in the query, I get the ID and the ServerName and I use those in the script. I’m just presenting you with how I do it and you can change it.

Here’s the SP that I use in the DB that gets called by this script. It’s very simple.

CREATE procedure [Collector].[spServiceAcctInsert]

@ExecutionDateTime datetime,

@InstanceID int,

@ServiceName varchar(50),

@StartName varchar(50)

AS

Insert dbo.ServiceAcct

Select

@ExecutionDateTime,

@InstanceID,

@ServiceName,

@StartName

And now here’s the table that you’re logging to:

CREATE TABLE [dbo].[ServiceAcct](

[ID] [int] IDENTITY(1,1) NOT NULL,

[ExecutionDateTime] [datetime] NULL,

[InstanceID] [int] NULL,

[ServiceName] [varchar](50) NULL,

[StartName] [varchar](50) NULL

)

And that’s all there is to it. From here, now that you’ve got all of your service accts and the AD accts associated with them, you can easily query this table to see the impact of changing a password or just see how bad your security is. This is useful info to have. You don’t have to run this kind of thing often though. In fact, it’s not a query that I have scheduled. I typically just run it when I want to know something specific, but you may have a reason to schedule it.
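As a rough idea of what that impact check could look like, here's a sketch that takes rows shaped like dbo.ServiceAcct and lists every box and service running under one account. The function name Get-AccountImpact and the CORP\... account names are made up for the example, and it assumes PowerShell 3.0 or later.

```powershell
# Sketch: which boxes and services would a password change touch?
# Get-AccountImpact is a hypothetical helper; rows mimic dbo.ServiceAcct.
function Get-AccountImpact {
    param(
        [object[]] $Rows,       # rows with InstanceID, ServiceName, StartName
        [string]   $StartName   # the AD account you plan to change
    )
    $Rows |
        Where-Object { $_.StartName -eq $StartName } |
        Sort-Object InstanceID, ServiceName |
        Select-Object InstanceID, ServiceName
}

# Made-up sample rows.
$rows = @(
    [pscustomobject]@{ InstanceID = 1; ServiceName = 'SQL Server (MSSQLSERVER)';       StartName = 'CORP\sqlsvc' }
    [pscustomobject]@{ InstanceID = 1; ServiceName = 'SQL Server Agent (MSSQLSERVER)'; StartName = 'CORP\sqlagent' }
    [pscustomobject]@{ InstanceID = 2; ServiceName = 'SQL Server (MSSQLSERVER)';       StartName = 'CORP\sqlsvc' }
)

$impact = @(Get-AccountImpact -Rows $rows -StartName 'CORP\sqlsvc')
$impact | Format-Table -AutoSize
```

With the real table, the rows would come from an Invoke-Sqlcmd call selecting InstanceID, ServiceName, and StartName from dbo.ServiceAcct.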

OK guys, that’s all I’ve got on that. Enjoy powershelling.

Today’s Powershell Fun

I’m having big fun with PS today because I’m installing several new SQL Server 2008 R2 boxes on Windows Server 2008 R2. Where does PS come into play you ask? Well, good question… I only wish I had a good answer. Ok, I do.

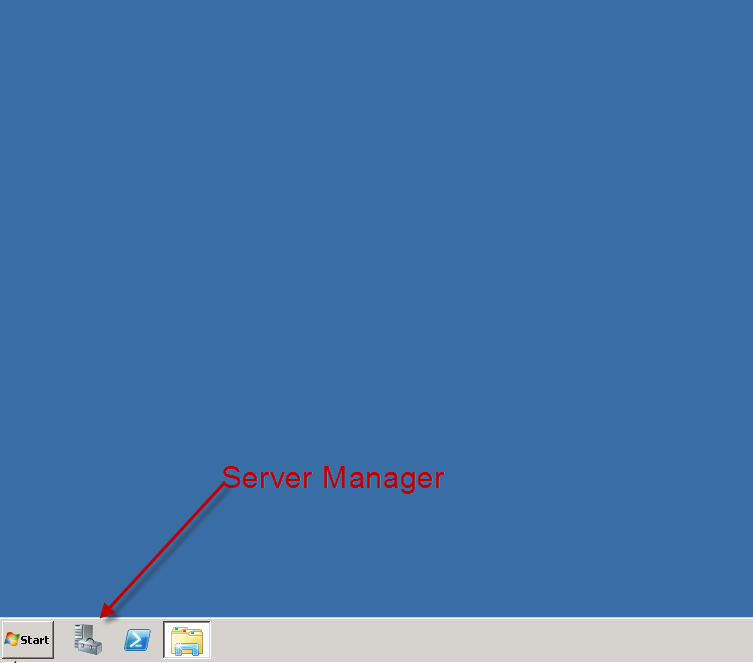

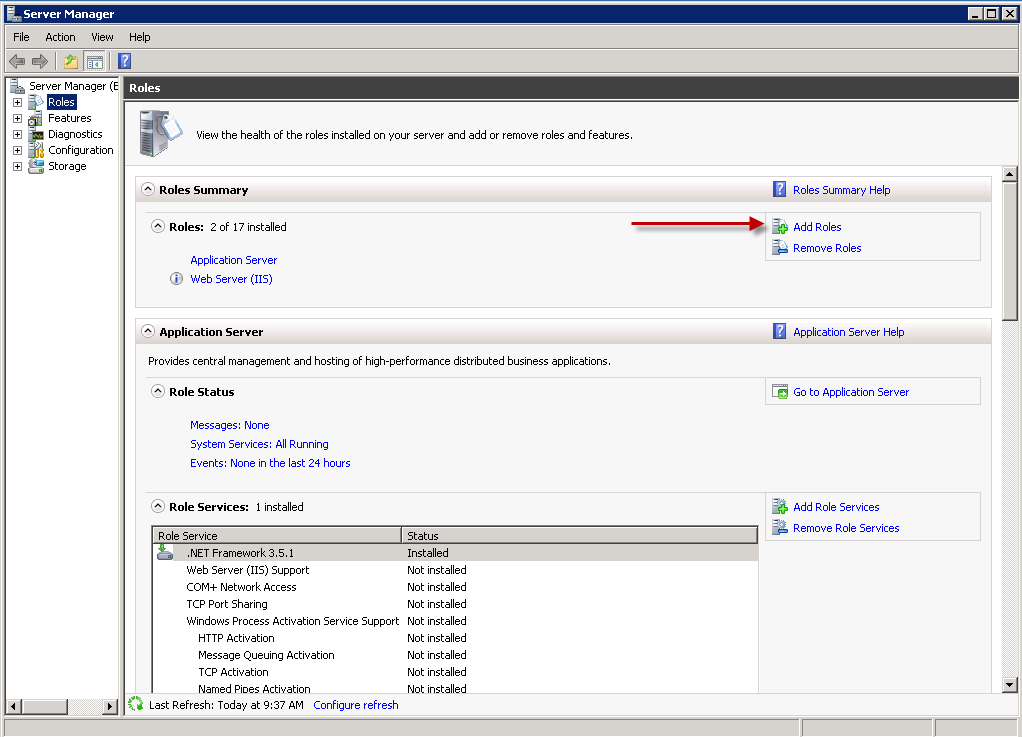

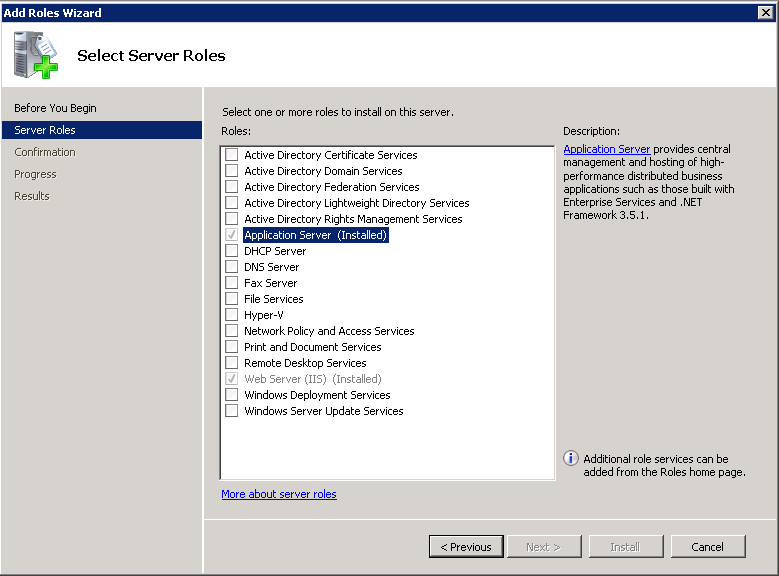

In order to install SQL Server R2 on Windows 2008 R2 you have to first install the .NET Framework. However, the SQL installer doesn’t do it for you. It just tells you that you have to install it. So the normal way is to go into the server manager and then to ‘roles’ and then ‘add a role’. Once you’re in the add role wizard choose ‘Application Server’. Here are some brief screens of the process.

As you can see I’ve already installed it on this server, but it’s pretty easy to figure out from here. Just install the .NET Framework (it’ll actually already be checked for you) and you’re golden. Now, that takes too much time for me, especially when I’m installing several boxes at once. So what I like to do is call on powershell for this task. I’m going to take some extra time here to show you a couple things, but the process once you get it down is much faster than the wizard.

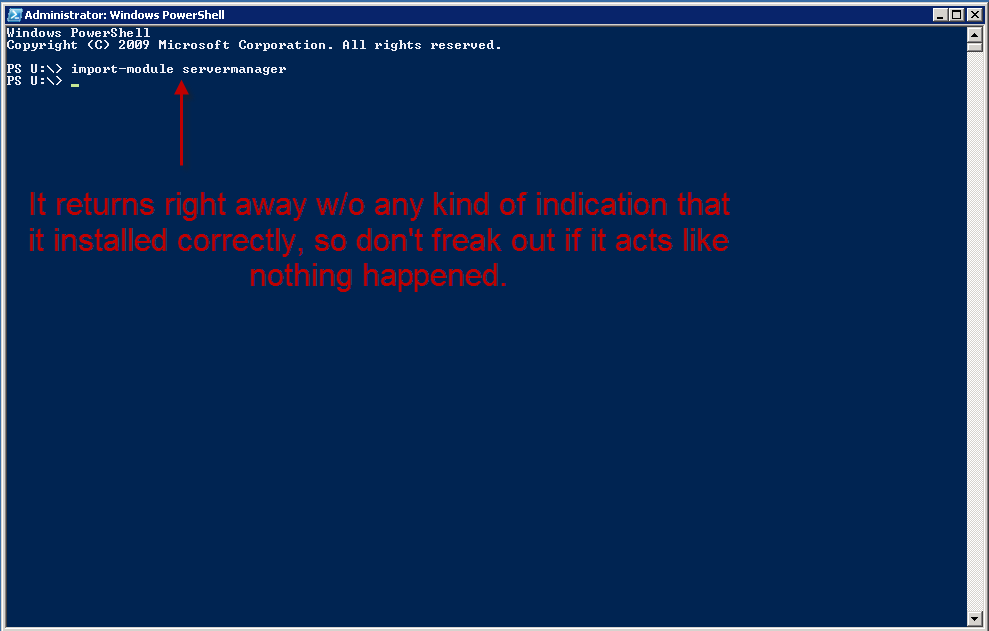

First of all, you have to load the windows modules into your PS session. To do it manually, you can open PS and type the following command:

> Import-Module ServerManager

Here’s a screen:

However, now you’ll be able to run the next command.

> Get-WindowsFeature

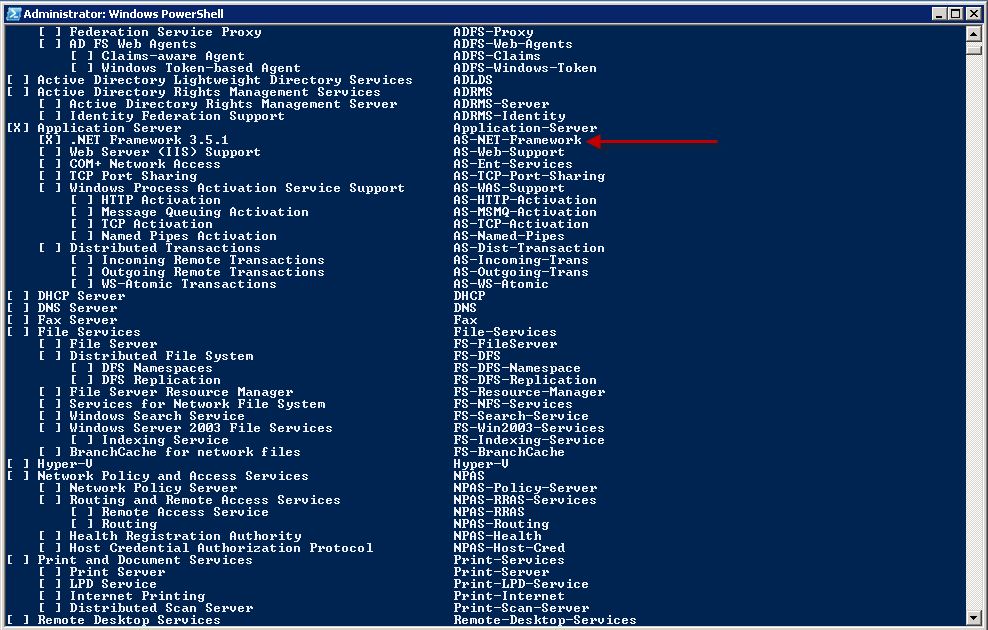

This will show you all the features available and which ones are already installed. Here’s a screen of the output.

And of course, you’ll notice on mine, it’s already installed, but it’ll be unchecked on yours. OK, now you’re ready to do the install itself.

And for that, you need yet another command, but don’t worry, it’s easy.

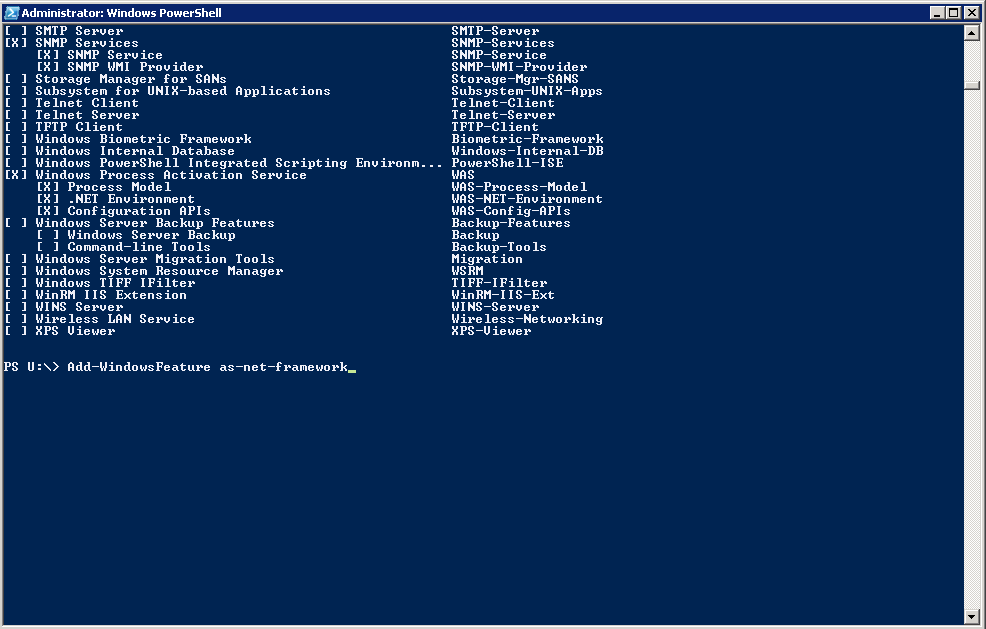

> Add-WindowsFeature AS-NET-Framework

Notice I put an arror by the name of the feature above that you’ll feed to the Add-WindowsFeature cmdlet. So in case you didn’t catch it, the syntax is simply the cmdlet and the name of the feature you want to install.

Here’s a screen of the line as it’ll look when you type it.

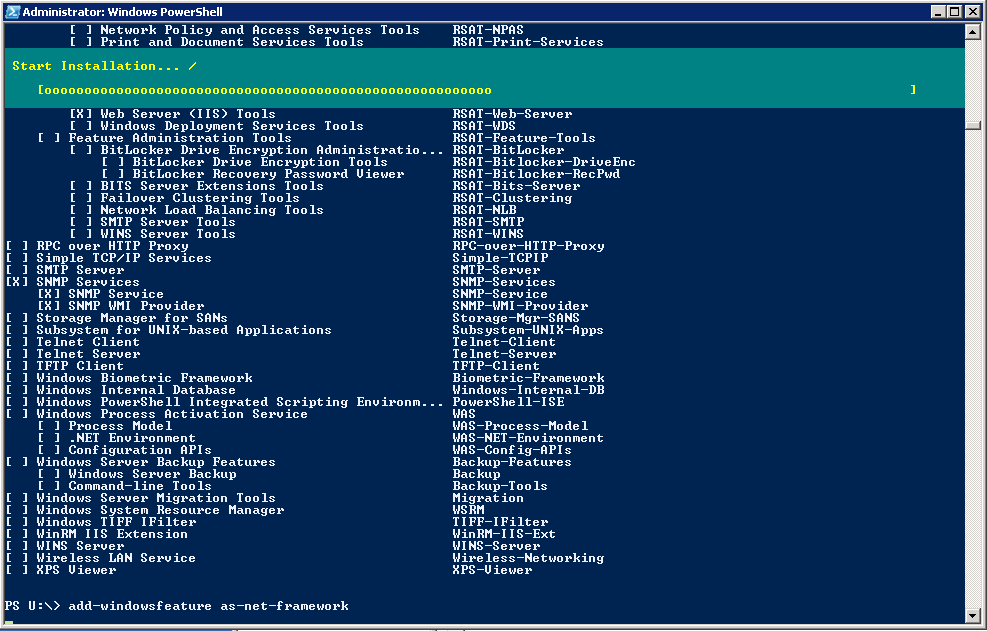

And here’s what it looks like while it’s installing:

And that’s it guys. The install is actually much quicker from the cmdline and you don’t have to worry about making a mistake through the GUI. And of course you don’t have to go through all these steps every time. Why not write a short script to do it for you? It would look like this:

Import-Module ServerManager

Add-WindowsFeature AS-NET-Framework

That’s it. That’s all it takes to install it from a script. Now you can save that off to the network somewhere and just use it whenever you like.

OK, that’s all I’ve got.

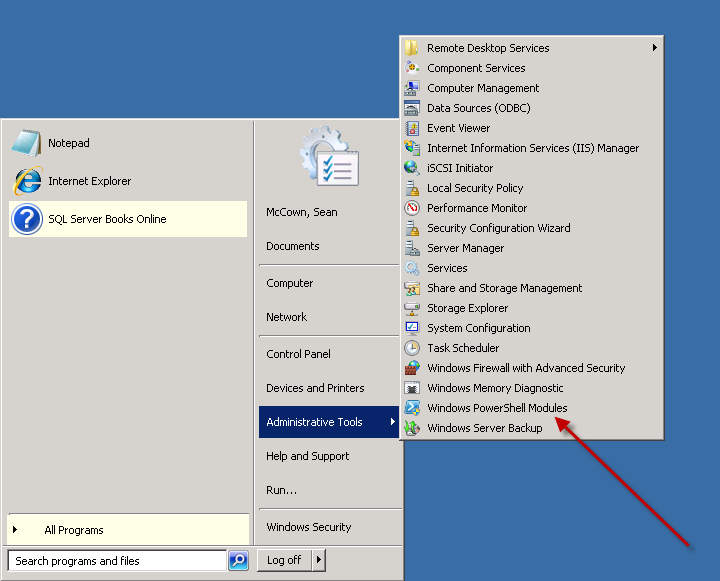

Oh y, one more thing… you don’t have to import the servermanager module every time you run this. You can use the PS shell that loads all the windows modules for you. You can find it here:

Ok, THAT’S all I’ve got.

Powershell to recycle error log

Here’s a really good one. I noticed that very few of my boxes had a job to recycle the sql error logs. This is important because unless specifically done, the error log will only recycle when the service is burped in some way. For those of you who aren’t up on the lingo, recycling the error log is when you close down one log and create a new one. The error logs are just txt files kept on the server. So recycling creates a new physical txt file. And the reason you care about this is because some servers don’t get bounced very often at all and the files can get quite large. I have one server with a 40GB error log. And when I try to open it, it sits there for like 2hrs and then finally dies cause there aren’t enough system resources to deal with a file that big. So you can see why you want to keep the size of the files down.

And like I said, the error log only recycles when you burp the service or do it manually. And the way you do it manually is by running this code:

master.dbo.sp_cycle_errorlog

But doing it manually isn’t good enough because it has to be done on a regular basis. I prefer to recycle my logs every day. It’s a good generic schedule and honestly I don’t ever remember needing anything more granular. OK, so a new log every day is what we’re after. That means we need to put this code in a job and have it run every day at the same time.

So ok, that set the stage for what we’re wanting to do here. Now we can get into what we actually wanna talk about… and that’s how we push our new job to all of our boxes. Now, I’m going to do this a specific way and I’m doing it like this for a reason. I’ll explain once I’m done.

Here are the steps I want you to take to prep for this venture.

1. Create a job to occur every day (Midnight is preferable) and include the above code in the job step.

2. Be sure to set the job owner to sa.

3. Script the job and save it as a file somewhere.

4. You’ll have to script the job for different versions of SQL. SQL2K doesn’t have job schedules so the script blows up. So at least have one for SQL2k and below, and one for 2K5 and above.

Now here’s the powershell script you’ll run.

$SourceSQLScript1 = "\\Server01\F$\SQLServerDBA\Scripts\Collector\SQLQueries\ServerListAllSQL2000.txt";

$SqlCmd1 = Invoke-Sqlcmd -ServerInstance $Server -Database $StatsDB -inputfile $SourceSQLScript1

$SqlCmd1 | % {

[System.String]$ServerName = $_.ServerName;

$ServerName;

invoke-sqlcmd -ServerInstance $ServerName -Database "MSDB" -InputFile "\\Server01\F$\SQLServerDBA\Scripts\ServerConfig\RecycleSQLErrorLogJOB-SQL2K.sql" -SuppressProviderContextWarning

}

Now I’m going to explain what’s going on here and why:

1. $SourceSQLScript1 — This is the var that holds the location of the txt file where I store the query for the list of servers to run against. Inside the file looks like this: select ServerID as InstanceID, ServerName from dbo.Servers (nolock) where IsSQL = 1 and SQLVersion = 2000. The reason I do it this way is because if I ever need to change the query, all of the scripts that call the query will automatically pick up the change. So putting the query in a file is a good thing.

2. $SqlCmd1 — here’s where I’m actually running the query in the step above and setting the results to this var. Now I’ve got an array of ServerNames I can cycle through to run my script on.

3. [System.String]$ServerName = $_.ServerName; — Here I’m just setting the current cursor iteration of the ServerName equal to a var ($ServerName). It just makes it easier to read and if something comes up where I need to make a minor adjustment to how the ServerName looks, then I can mess with the var and not have to touch the rest of the script. Again, reducing effects to the script if I need to make changes to the value. So it’s like later on down the line if I discover that I need to put [] around it or something like that. This could also be done in the query file, but it’s nice to have the option here as well should I need something special for a single script.

4. $ServerName; — Here I’m just printing out the ServerName so I can watch my script cycle through the boxes. It’s my way of knowing what’s going on. I hate that blinking cursor.

5. invoke-sqlcmd — This is where the real magic happens. I’m connecting to each box and running the job script I saved above.

Here are some finer points of the logic for why I did things the way I did:

1. I put the server list query into a var. This is to allow you (or me) to get the server list any way we like w/o disturbing the rest of the query. You could have a hardcoded list of serverNames, or have them in a file… whatever you like. Here’s a really good example.

Instead of this:

$SqlCmd1 = Invoke-Sqlcmd -ServerInstance $Server -Database $StatsDB -inputfile $SourceSQLScript1

You can have this:

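$SqlCmd1 = "Server1", "Server2", "Server3", "Server4", "Server5"

And the rest of the script stays the same because the cursor is run off of the var. It doesn’t care how that var gets populated. The one catch is that the loop reads $_.ServerName, so with a plain list of strings like this you’d set $ServerName from $_ instead.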
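If you want the loop body to stay completely untouched with a hardcoded list, each item needs a ServerName property, since the loop reads $_.ServerName and a plain string doesn't have one. Here's a minimal sketch of one way to do that by wrapping each name in an object. The server names are placeholders, and the $names variable is only there so you can see what the loop produced.

```powershell
# Sketch: give each hardcoded name a ServerName property so it looks
# like a row coming back from Invoke-Sqlcmd.
$SqlCmd1 = 'Server1', 'Server2', 'Server3' | ForEach-Object {
    New-Object PSObject -Property @{ ServerName = $_ }
}

# Same loop body as the script above, minus the invoke-sqlcmd call.
$names = $SqlCmd1 | % {
    [System.String]$ServerName = $_.ServerName;
    $ServerName;   # print the name so you can watch it cycle through
}

$names
```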

2. I chose to run this separately for each version of SQL. So I ran it once for SQL2K, once for SQL2K5, etc. That’s because like I said there are differences in the job scripts for the different versions. I could have done it all in a single script, but I really don’t like coding that logic in there and it increases the size of the script because now I have to check for the version of SQL and make a decision before I can create the job. I have to point it to the right .sql script. That’s too much work when all I have to do is change the query file and the script file between runs. And this way, I can run them concurrently for each version simply by saving the script under different names and kicking them off at the same time.

3. This one is my favorite because it’s what makes this so flexible. I chose to put the sql logic itself into a file and just call that file. The reason I did this is because not only is the sql logic too long for this type of script, but if I’ve got anything else I need to run against all my boxes, all I have to do is change the sql script I’m calling. I don’t have to make any changes at all to the rest of the script. So if I want to create or delete any specific users off of a group of boxes, all I have to do is put that code in a script and save it, and then point this script to it. Or anything else, right? This is really the bread and butter for powershell and multi-server mgmt.

So guys, take this boiler plate script and methodology and apply it to your own stuff. I’ve taken a lot of stuff out of the script for this blog because my scripts are part of a much larger solution I’ve developed and it’s not relevant to what you’ll be doing. But the script works as-is and you can add whatever you want to it. And now you’ve got a generic way to push T-SQL out to your boxes, and a generic way to populate the server list it goes against. What else do you need really?

Cool Powershell Scenario

Here’s a cool scenario where you need to set all of your DBs to simple mode and then back again.

Let’s say that you have a bunch of DBs on your server that are all in full mode and you’re setting up new backup routines on the server and you want to start everything from scratch. So in this scenario you may want to switch all the DBs to simple mode to truncate the logs and then back to full again. This is to prevent a huge log backup the first time, and you may not even have a full backup file to go with it anymore so what would be the point?

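So here’s some simple code you could run to make this happen very quickly and easily. Run it from the Databases node of the server in the SQL provider (something like SQLSERVER:\SQL\Server1\DEFAULT\Databases).

> dir | %{$_.set_RecoveryModel(3); $_.Alter()} # Set recovery to simple to truncate the logs. Alter() pushes the change to the server.

> dir | %{$_.set_RecoveryModel(1); $_.Alter()} # Set recovery back to full.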

Everything you do with powershell doesn’t have to cure cancer. It can just save you a couple mins or even a few mins of tedium. Writing really cool scripts to do big things is awesome, but most of the stuff you’re going to do is this adhoc kinda stuff. That’s the reason I love powershell so much, because you don’t have to do anything grandiose with it. You can just make your day easier.

Powershell wins again

It may seem a little redundant, but I love stuff like this. I was asked in front of a group of devs to script out a group of SPs on the prod box and copy them over to the new test box. These SPs stretch across a couple schemas and are named differently from the other ones in those schemas. As it turns out, there are something like 300 of them total. I don’t have a final count.

So when the guy asked me I said sure, that’ll take me like 60secs. And one of the other devs said, there’s no way. You have to check all of those boxes individually and make sure you don’t miss anything. I said, of course I can. I’m a powershell guy (yes, I actually said that). He was like, even if you could script something like that out, there’s no way to easily get all the ones you need. You’ll be much faster in the wizard.

I told him, I accept your challenge. And for the first time, I gave a dev rights in prod and we had a face-off right there. We sat side by side and both of us started working feverishly to get our SPs scripted. Him in the wizard and me in powershell. Very quickly a crowd gathered. We prob had like 15-20 people gather. These were the PMs, other devs, report writers, etc. They all wanted to see if the bigshot DBA MVP could be taken down by a lowly dev.

Unfortunately like 90secs later, I completed my script and was building my file with my scripted SPs. He was still slugging his way through the wizard and wasn’t even close to having all his little boxes checked. When I finished, I just stood up and walked out as everyone clapped. When I left to come back upstairs he was still working at the wizard determined to at least finish.

At least that’s how my powershell hero stories always play-out in my mind. I really did get that assignment today, but it was through email and I just did it without any pomp and circumstance. Oh well, a guy can dream can’t he?

Here’s the code I wrote today to pull SPs by schema and matching a pattern. I used the regex in powershell to make this happen. Enjoy.

PS SQLSERVER:\SQL\Server1\DEFAULT\Databases\ProdDB\StoredProcedures> dir | ?{$_.schema -eq "Bay" -or $_.schema -match "EBM"} | ?{$_.Name -match "Bay?_PR"} | %{$_.Script() | out-file C:\SPs.txt -append; "GO" | out-file C:\SPs.txt -append}
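If the regex piece is new to you, it helps to try the pattern on a few names before pointing it at prod. The -match operator is unanchored and case-insensitive, and the ? makes the y optional, so the pattern hits Ba_PR or Bay_PR anywhere in the name. The procedure names in this little sketch are made up just to show the behavior.

```powershell
# Sketch: test the name pattern against a few made-up procedure names.
$pattern = 'Bay?_PR'
$names = 'Bay_PR_GetOrders', 'Ba_PR_Load', 'Bay_GetOrders', 'EBM_Bay_PR_Sync'

# Keep only the names the pattern matches.
$matched = @($names | Where-Object { $_ -match $pattern })
$matched
```

Only Bay_GetOrders drops out here, because it never has _PR right after Ba or Bay.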

Maybe someday one of you will actually save the day with it.

How to Monitor SQL Services with Powershell

Here’s the situation…

You get a call from one of your customers saying that the log has filled up on the DB and they can’t do anything any more. So you connect to the server and find out that the log backups haven’t been running. So you run the backup and everything is hunkydory. But why did it fail to run in the first place? Well about 3secs of investigation tells you that the Agent was turned off. Ok, you turn it back on and go on about your business. But this isn’t the way to do things. You don’t want your customers informing you of important conditions on your DB servers. And you certainly don’t want Agent to be turned off.

And while there may be some other ways to monitor whether services are running or not, I’m going to talk about how to do it in PS. There are 2 ways to do this in PS… get-service and get-wmiobject. Let’s take a look at each one to see how they compare.

In the old days (about 2yrs ago), when all we had was the antiquated powershell v.1, you had to use get-wmiobject for this task because get-service didn’t allow you to hit remote boxes. All that’s changed now so you can easily run get-service against a remote box with the -computername parameter.

get-service -computername Server2

And of course it supports a comma-separated list like this:

get-service -computername Server2, Server3

And just for completeness here’s how you would sort it, because by default they’re going to be sorted by DisplayName so services from both boxes will be inter-mingled.

get-service -computername Server2, Server3 | sort -property MachineName | FT MachineName, DisplayName, Status

Ok, that was more than just sorting wasn’t it? I added a format-table (FT) with the columns I wanted to see. You have to put the MachineName there so you know which box you’re going against, right? And the status is whether it’s running or not.

Remember though that I said we were going to do SQL services, and not all the services. So we still have to limit the query to give us only SQL services. This too can be done in 2 ways:

get-service -computername Server2, Server3 -include "*sql*" | sort -property MachineName | FT MachineName, DisplayName, Status

get-service -computername Server2, Server3 | ?{$_.DisplayName -match "sql"} | sort -property MachineName | FT MachineName, DisplayName, Status

So here I’ve used the -include and the where-object(?). They’ll give you close to the same results, with one thing to watch: -include matches on the service name while my where-object matches on the DisplayName, so a service whose name doesn’t contain “sql” can show up in one list and not the other. Beyond that, the -include will filter the results on the remote server and the where-object will filter them on the client. So ultimately the -include will be more efficient because you don’t have to send all that extra text across the wire only to throw it away.

And of course, you don’t have to use that inline list to go against several boxes. In fact, I don’t even recommend it because it doesn’t scale. For purposes of this discussion I’ll put the servers in a txt file on C:. Here’s how you would do the same thing while reading the servers from a txt file, only this time you could very conveniently have as many servers in there as you like. And when creating the file, just put each server on a new line like this:

Server2

Server3

So here’s the same line above with the txt file instead:

get-content C:\Servers.txt | %{get-service -computername $_ -include "*sql*" | sort -property MachineName | FT MachineName, DisplayName, Status}

This is well documented so I’m not going to explain the foreach(%) to you.

Ok, so let’s move on to the next method because I think I’ve said all I need to say about get-service. But isn’t this just gold?

get-wmiobject

Earlier I was talking about what we did in the old days and I always used to recommend get-wmiobject because of the server limitation imposed on get-service. However, does that mean that get-wmiobject is completely interchangeable with get-service now? Unfortunately not. I’m going to go ahead and cut to the chase here and say that you’ll still wanna use get-wmiobject for this task most of the time… if not all of the time, because why change methods?

You’ll notice one key difference between doing a gm against these 2 methods:

get-service | gm

get-wmiobject win32_service | gm

The get-wmiobject has more methods and more properties.

And the key property we’re interested in here is the StartMode.

If you’re going to monitor for services to see which ones are stopped, it’s a good idea to know if they’re supposed to be stopped. Or even which ones are set to Manual when they should be set to Automatic.

And for this reason I highly recommend using get-wmiobject instead of get-service.

Here’s some sample code using the txt file again.

get-content C:\Servers.txt | %{get-wmiobject win32_service -computername $_ -filter "DisplayName like '%sql%'"} | FT SystemName, DisplayName, State, StartMode -auto

Notice that the names of things change between methods too, so watch out for that. So like MachineName changes to SystemName. You’ll also notice that I didn’t provide you with a full working example of a complete script. That’ll be for another time perhaps. The script I use fits into an entire solution so it’s tough to give you just a single script w/o also giving you all the stuff that goes along with it. And that just gets out of the scope of a single blog post.
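To show why StartMode matters, here's a small sketch of the check a monitor would make: a service only counts as a problem if it's set to start automatically and isn't running. Select-DownService is a made-up name, and the sample objects just stand in for what get-wmiobject win32_service returns (State and StartMode use the values WMI reports, like Running, Stopped, Auto, and Disabled). It assumes PowerShell 3.0 or later.

```powershell
# Sketch: flag services that should be up (StartMode Auto) but aren't running.
# Select-DownService is a hypothetical helper name.
function Select-DownService {
    param([Parameter(ValueFromPipeline = $true)] $Service)
    process {
        if ($Service.StartMode -eq 'Auto' -and $Service.State -ne 'Running') {
            $Service
        }
    }
}

# Made-up sample objects shaped like win32_service results.
$services = @(
    [pscustomobject]@{ SystemName = 'Server2'; DisplayName = 'SQL Server (MSSQLSERVER)';       State = 'Running'; StartMode = 'Auto' }
    [pscustomobject]@{ SystemName = 'Server2'; DisplayName = 'SQL Server Agent (MSSQLSERVER)'; State = 'Stopped'; StartMode = 'Auto' }
    [pscustomobject]@{ SystemName = 'Server3'; DisplayName = 'SQL Server Browser';             State = 'Stopped'; StartMode = 'Disabled' }
)

$down = @($services | Select-DownService)
$down | Format-Table SystemName, DisplayName, State, StartMode -AutoSize
```

The disabled Browser service is stopped on purpose, so it stays out of the list; only the Agent gets flagged.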

However, I’ll leave you with these parting pieces of advice when building your service monitor.

1. Instead of pulling the servers from a txt file, put them in a table somewhere so you can run all of your processes from that location.

2. Use get-wmiobject win32_service instead of get-service. It’s more flexible.

3. When you collect your data, just save it to a table somewhere instead of alerting on it right away. In other words, there should be a collection and a separate alerting mechanism.

*** Why you ask? Well I’m glad you asked, because not asking something that should be asked is like asking something that shouldn’t be asked but in reverse. Anyway though… I prefer to get a single alert on all my boxes at once instead of an alert for each box, or for each service. And that kind of grouping is much easier to do in T-SQL than in PS. Also, there may come a time when a service is down for a reason and you don’t want to get alerts on it but you still want to get alerts on the rest of the environment. This is easier to do in T-SQL as well. And finally, you may want to also attempt to start the services that are down and that really should be a separate process so you can control it better. Or you may just want to be alerted and handle them manually. Again, what if the service is supposed to be down for some reason, you certainly don’t want the collection process going out and restarting it for you. And the collection can be a nice way to make sure you remember to turn the service back on when you’re done with whatever you were doing. You’ll get an alert saying it’s down, and you’ll be all like, oh yeah, I totally forgot to turn that back on and my backups aren’t kicking off. All the same, you really do want the collection, alerting, and action processes to be separated. But that’s just me, you do what you want. ***

4. Keep a history of the status of the services. You can look back over the last few months and see which ones have given you the most trouble and you can then try to discover why. It’s good info to have and you may not realize how much time you’re spending on certain boxes until you see it written down like that.

How to save your life with powershell.

I’m always preaching the wonders of powershell, but I think quite often I forget to stress the impact that it can provide. And today is one of those times when I’m able to give you a real life impact of how PS can save your life… ok, your job anyway.

One of the things PS does really well is scripting DB objects. It’s always one of the first things I show that’s really useful in my classes and it always gets an appropriate amount of gasps. But other than creating dev systems from prod, what good is it? Well, it’s a fabulous way to script your prod environment on a regular basis just to have the scripts handy. If you’re able to use PS to easily script your tables, SPs, views, users, etc. then you’re going to have a history of your DB that you can pull from anytime. And if you’re smart enough to store those scripts in txt files with dates appended to them, then you can always get back a complete version of your DB for any given day. Cool huh?

And the implications of this are huge. Not only can you create a complete environment for any given day so that you can compare it with the current version, or any other version you like, but much more likely is the scenario where someone makes a mistake in prod and needs some small piece of code replaced. So someone drops an SP, or alters a view, or kills a job, or anything else along those lines. Of course, this won’t help you with data accidents, but imagine the possibility of being able to pull your butt out of the fire because you were able to recover from a mistake you made… or anyone else made.

This came not only in handy, but actually saved my ass at my last gig where I had these scripts running and accidentally dropped all the SPs in our prod DB. I could have sworn I was on dev, right? So I had my PS scripts to import the objects again too, so as soon as I realized my mistake I recreated all the SPs and all was well with only a minor hiccup to our reporting cycle. But that’s exactly what I’m talking about. If you have these things ready to go you’re ready for whatever kind of disaster comes up and if you not only have the scripted objects sitting there, but also the scripts to put them back, then you’re in the best shape you can possibly be in.

My favorite way to store these like I said is in txt files.

I put them in a folder named for the DB, then another subfolder after the object type, then I name the files by schema.objectname with the date appended, plus .txt. So a sample path to an object would look like this:

c:\scripts\DB1\SPs\dbo.SP120100714.txt

Now to parse the date back out is a simple matter and can be handled with a simple PS script. And the scripts you need to restore can be as easy or complicated as you like. You can have any or none of the following:

Restore all tables

Restore all indexes

Restore all SPs

Restore all views

Restore single SP

Restore single view

etc…

You could also combine them into a single PS script more or less that you just pass an object type to and it figures out the path and does the restore for you that way. Either way is good really.
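Here's a rough sketch of the two pieces that idea needs: building the path from the DB, object type, schema, and name, and parsing the date back out of a file name. The function names Get-ScriptPath and Get-ScriptDate are made up for the example, and the yyyyMMdd stamp is an assumption read off the sample path above.

```powershell
# Sketch: build and parse dated script file names like dbo.SP120100714.txt.
# Function names are hypothetical; the yyyyMMdd stamp is assumed from the sample path.
$inv = [System.Globalization.CultureInfo]::InvariantCulture

function Get-ScriptPath {
    param(
        [string]   $Root = 'c:\scripts',
        [string]   $Database,
        [string]   $ObjectType,   # folder name, e.g. SPs
        [string]   $Schema,
        [string]   $Name,
        [datetime] $Date
    )
    $file = '{0}.{1}{2}.txt' -f $Schema, $Name, $Date.ToString('yyyyMMdd', $inv)
    # Join with a literal backslash to match the folder layout described above.
    ($Root, $Database, $ObjectType, $file) -join '\'
}

function Get-ScriptDate {
    param([string] $Path)
    # The last 8 digits before .txt are the date stamp, even if the
    # object name itself ends in a digit.
    if ($Path -match '(\d{8})\.txt$') {
        [datetime]::ParseExact($Matches[1], 'yyyyMMdd', $inv)
    }
}

$path = Get-ScriptPath -Database 'DB1' -ObjectType 'SPs' -Schema 'dbo' -Name 'SP1' -Date ([datetime]'2010-07-14')
$path                                            # c:\scripts\DB1\SPs\dbo.SP120100714.txt
(Get-ScriptDate $path).ToString('yyyy-MM-dd')    # 2010-07-14
```

A restore script could take the object type as a parameter, use something like Get-ScriptPath to find the folder, and then pick the files whose parsed date matches the day you want back.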

So set up your PS scripts to script your DB objects and store them someplace. It’s only txt so you can keep them pretty far back if you like, and even archive them in zipped files if you like.

Good luck.

24-hrs of PASS is Online

If you haven’t gotten your email yet, or if it’s just gone into your spam, the 24-hrs of PASS recordings are ready.

You’ll find the main page here (it’ll make you login): http://www.sqlpass.org/LearningCenter/24Hours.aspx

And don’t forget to look specifically for my session here: http://passfiles.sqlpass.org/uploads/24Hours/05_20_2010_05AM_downloadHFP/Engine/Default.htm?http%3A%2F%2Fpassfiles.sqlpass.org%2Fuploads%2F24Hours%2F05_20_2010_05AM_downloadHFP%2F

If the link above doesn’t work then mine is Session #18.